Tendency, Convenient Errors, and the Importance of Physical Reasoning.

by Pat Frank

Last February 7, statistician Richard Booth, Ph.D. (hereinafter, Rich) posted a very long critique titled, “What do you mean by “mean”: an essay on black boxes, emulators, and uncertainty,” which is very critical of the GCM air temperature projection emulator in my paper. He was also very critical of the notion of predictive uncertainty itself.

This post critically assesses his criticism.

An aside before the main topic. In his critique, Rich made many of the same mistakes in physical error analysis as do climate modelers. I have described the incompetence of that guild at WUWT here and here.

Rich and climate modelers both describe the probability distribution of the output of a model of unknown physical competence and accuracy as being identical to physical error and predictive reliability.

Their view is wrong.

Unknown physical competence and accuracy describes the current state of climate models (at least until recently. See also Anagnostopoulos, et al. (2010), Lindzen & Choi (2011), Zanchettin, et al., (2017), and Loehle, (2018)).

GCM climate hindcasts are not tests of accuracy, because GCMs are tuned to reproduce hindcast targets. For example, here, here, and here. A test of GCMs against a past climate that they were tuned to reproduce is no indication of physical competence.

When a model is of unknown competence in physical accuracy, the statistical dispersion of its projective output cannot be a measure of physical error or of predictive reliability.

Ignorance of this problem entails the very basic scientific mistake that climate modelers evidently strongly embrace and that appears repeatedly in Rich’s essay. It reduces both contemporary climate modeling and Rich’s essay to scientific vacancy.

The correspondence of Rich’s work with that of climate modelers reiterates something I realized after much immersion in published climatology literature: that climate modeling is an exercise in statistical speculation. Papers on climate modeling are almost entirely statistical conjectures. Climate modeling plays with physical parameters but is not a branch of physics.

I believe this circumstance refutes the American Statistical Society’s statement that more statisticians should enter climatology. Climatology doesn’t need more statisticians because it already has far too many: the climate modelers who pretend at science. Consensus climatologists play at scienceness and can’t discern the difference between that and the real thing.

Climatology needs more scientists. Evidence suggests many of the good ones previously resident have been caused to flee.

Rich’s essay ran to 16 typescript pages and nearly 7000 words. My reply is even longer: 28 pages and nearly 9000 words, followed by an 1800-word Appendix.

For those disinclined to go through the Full Tilt Boogie below, here is a short precis followed by a longer summary.

The very short take-home message: Rich’s entire analysis has no critical force.

A summary list of its problems:

1. Rich’s analysis shows no evidence of physical reasoning.

2. His proposed emulator is constitutively inapt and tendentious.

3. Its derivation is mathematically incoherent.

4. The derivation is dimensionally unsound, abuses operator mathematics, and deploys unjustified assumptions.

5. Offsetting calibration errors are incorrectly and invariably claimed to promote predictive reliability.

6. The Stefan-Boltzmann equation is inverted.

7. Operators are improperly treated as coefficients.

8. Accuracy is repeatedly abused and ejected in favor of precision.

9. The GCM air temperature projection emulator (paper eqn. 1) is fatally confused with the error propagator (paper eqn. 5.2).

10. The analytical focus of my paper is fatally misconstrued to be model means.

11. The GCM air temperature projection emulator is wrongly described as used to fit GCM air temperature means.

12. The same emulator is falsely portrayed as unable to emulate GCM projection variability, despite 68 examples to the contrary.

13. A double irony is that Rich touted a superior emulator without ever displaying a single successful emulation of a GCM air temperature projection.

14. All the difficulties of measurement error or model error are assumed away (qualifying Rich to be a consensus climatologist).

15. Uncertainty statistics are wrongly and invariably asserted to be physical error or an interval of physical error.

16. Systematic error is falsely asserted as restricted to a fixed constant bias offset.

17. Uncertainty in temperature is falsely and invariably construed to be an actual physical temperature.

18. Error is assumed to be a random variable, invariably ad hoc and without empirical justification.

19. The JCGM description of standard uncertainty variance is self-advantageously misconstrued.

20. The described use of rulers or thermometers is unrealistic.

21. Readers are advised to record and accept false precision.

A couple of preliminary instances highlight the difference between statistical thinking and physical reasoning.

Rich wrote that, “It may be objected that reality is not statistical, because it has a particular measured value. But that is only true after the fact, or as they say in the trade, a posteriori. Beforehand, a priori, reality is a statistical distribution of a random variable, whether the quantity be the landing face of the die I am about to throw or the global HadCRUT4 anomaly averaged across 2020.”

Rich’s description of an a priori random variable status for some as-yet unmeasured state is wrong when the state of interest, though itself unknown, falls within a regime treated by physical theory, such as air temperature. Then the a priori meaning is not the statistical distribution of a random variable, but rather the unknown state of a deterministic system that includes uncontrolled but explicable physical effects.

Rich’s comment implied that a new aspect of physical reality is approached inductively, without any prior explanatory context. Science approaches a new aspect of physical reality deductively from a pre-existent physical theory. The prior explanatory context is always present. This inductive/deductive distinction marks a fundamental departure in modes of thinking. The first neither recognizes nor employs physical reasoning. The second does both.

Rich also wrote, “It may also be objected that many black boxes, for example Global Circulation Models, are not statistical, because they follow a time evolution with deterministic physical equations. Nevertheless, the evolution depends on the initial state, and because climate is famously “chaotic”, tiny perturbations to that state, lead to sizeable divergence later. The chaotic system tends to revolve around a small number of attractors, and the breadth of orbits around each attractor can be studied by computer and matched to statistical distributions.”

But this is not known to be true. On the one hand, an adequate physical theory of the climate is not available. This lack leaves GCMs as parameterized engineering models. They are capable only of statistical arrays of outputs. Arguing the centrality of statistics to climate models as a matter of principle begs the question of theory.

On the other hand, supposing a small number of attractors flies in the face of the known large number of disparate climate states spanning the entire variation between “snowball Earth” and “hot house Earth.” And supposing those states can be studied by computer and expressed as statistical distributions again begs the question of physical theory. Lots of hand-waving, in other words.

Rich went on to write that the problem of climate could be approached as “a probability distribution of a continuous real variable.” But this assumes the behavior of the physical system is smoothly continuous. The many Dansgaard-Oeschger and Heinrich events are abrupt and discontinuous shifts of the terrestrial climate.

None of Rich’s statistical conjectures are constrained by known physics or by the behavior of physical reality. In other words, they display no evidence of physical reasoning.

The Full-Tilt Boogie.

In his Section B, Rich set up his analysis by defining three sources of result:

1. physical reality → X(t) (data)

2. black box model → M(t) (simulation of the X(t)-producing physical reality)

3. model emulator → W(t) (emulation of model M output)

I. Problems with “Black Box and Emulator Theory” Section B:

Rich’s model emulator W is composed to, “estimate of the past black box values and to predict the black box output.” That is, his emulator targets model output. It does not emulate the internal behavior or workings of the full model in some simpler way.

Its formal structure is given by his first equation:

W(t) = (1-a)W(t-1) + R₁(t) + R₂(t) + (-r)R₃(t), (1ʀ)

where W(t-1) is some initial value and W(t) is the final value after integer time-step ‘t.’ The equation number subscript “ʀ” designates Rich as the source.

As an aside here, it is not unfair to notice that despite its many manifestations and modalities, Rich’s superior GCM emulator is never once used to actually emulate an air temperature projection.

The eqn. 1ʀ emulator manifests persistence, which the GCM projection emulator in my paper does not. Rich began his analysis, then, with an analogical inconformity.

The factors in eqn. 1ʀ are described as: “R1(t) is to be the component which represents changes in major causal influences, such as the sun and carbon dioxide. R2(t) is to be a component which represents a strong contribution with observably high variance, for example the Longwave Cloud Forcing (LCF). … R3(t) is a putative component which is negatively correlated with R2(t) with coefficient -r, with the potential (dependent on exact parameters) to mitigate the high variance of R2(t).”

Emulator coefficient ‘r’ is always negative. The R₃(t) itself is negatively correlated with R₂(t) so that R₃(t) offsets (reduces) the magnitude of R₂(t), and 0 ≤ a ≤ 1. The Rn(t) are defined as time-dependent random variables that add into (1-a)W(t-1).

The relative impact of each Rn on W(t-1) is R₁(t) > R₂(t) ≥ |rR₃(t)|.

A problem with factor R₃(t):

The R₃(t) is given to be “negatively correlated” with R₂(t), “to mitigate the high variance of R₂(t).” However, factor R₃(t) is also multiplied by coefficient -r.

“Negatively correlated” refers to R₃(t). The ‘-r’ is an additional and separate conditional.

There are three cases governing the meaning of ‘negative correlation’ for R₃(t).

1) R₃(t) starts at zero and becomes increasingly negative as R₂(t) becomes increasingly positive.

or

2) R₃(t) starts positive and becomes smaller as R₂(t) becomes large, but remains greater than zero.

or

3) R₃(t) starts positive and becomes small as R₂(t) becomes large but can pass through zero into negative values.

If 1), then -rR₃(t) is positive and has the invariable effect of increasing R₂(t), the opposite of what was intended.

If 2), then -rR₃(t) has a diminishing effect on R₂(t) as R₂(t) becomes larger, again opposite the desired effect.

If 3), then -rR₃(t) diminishes R₂(t) at low but increasing values of R₂(t), but increases R₂(t) as R₂(t) becomes large and R₃(t) passes into negative values. This is because -r(-R₃(t)) = rR₃(t). That is, the effect of R₃(t) on R₂(t) is concave upwards around zero, ⎝₀⎠.

That is, none of the combinations of -r and negatively correlated R₃(t) has the desired effect on R₂(t). A consistently diminishing effect on R₂(t) is frustrated.

With negative coefficient -r, the R₃(t) term must be greater than zero and positively correlated with R₂(t) to diminish the contribution of R₂(t) at high values.

Curiously, Rich did not designate what X(t) actually is (perhaps air temperature?).

Nor did he describe what process the model M(t) simulates, nor what the emulator W(t) emulates. Rich’s emulator equation (1ʀ) is therefore completely arbitrary. It’s merely a formal construct that he likes, but is lacking any topical relevance or analytical focus.

In strict contrast, my interest in emulation of GCMs was roused when I discovered in 2006 that GCM air temperature projections are linear extrapolations of GHG forcing. In December 2006, John A publicly posted that finding at Steve McIntyre’s Climate Audit site, here.

That is, I began my work after discovering evidence about the behavior of GCMs. Rich, on the other hand, launched his work after seeing my work and then inventing an emulator formalism without any empirical referent.

Lack of focus or relevance makes Rich’s emulator irrelevant to the GCM air temperature emulator in my paper, which was derived with direct reference to the observed behavior of GCMs.

I will show that the irrelevance remains true even after Rich, in his Section D, added my numbers to his invented emulator.

A Diversion into Dimensional Analysis:

Emulator 1ʀ is a sum. If, for example, W(t) represents one value of an emulated air temperature projection, then the units of W(t) must be, e.g., Celsius (C). Likewise, then, the dimensions of W(t-1), R₁(t), R₂(t), and -rR₃(t), must all be in units of C. Coefficients a and r must be dimensionless.

In his exposition, Rich designated his system as a time series, with t = time. However, his usage of ‘t’ is not uniform, and most often designates the integer step of the series. For example, ‘t’ is an integer in W(t-1) in equation 1ʀ, where it represents the time step prior to W(t).

Continuing:

From eqn. (1ʀ), for a time series i = 1→t and when W(t-1) = W(0) = constant, Rich presented his emulator generalization as:

[equation image not recovered] (2ʀ)

Let’s see if that’s correct. From eqn. 1ʀ:

W(t₁) = (1-a)W(0) + R₁(t₁) + R₂(t₁) - rR₃(t₁), 1ʀ1

where the subscript on t indicates the integer step number.

W(t₂) = (1-a)W(t₁) + R₁(t₂) + R₂(t₂) - rR₃(t₂) 1ʀ2

Substituting W(t₁) into W(t₂),

W(t₂) = (1-a)[(1-a)W(0) + R₁(t₁) + R₂(t₁) - rR₃(t₁)] + [R₁(t₂) + R₂(t₂) - rR₃(t₂)]

= (1-a)²W(0) + (1-a)[R₁(t₁) + R₂(t₁) - rR₃(t₁)] + (1-a)⁰[R₁(t₂) + R₂(t₂) - rR₃(t₂)]

(NB: (1-a)⁰ = 1, and is added for completion)

Likewise, W(t₃) = (1-a){(1-a)²W(0) + (1-a)[R₁(t₁) + R₂(t₁) - rR₃(t₁)] + [R₁(t₂) + R₂(t₂) - rR₃(t₂)]} + (1-a)⁰[R₁(t₃) + R₂(t₃) - rR₃(t₃)]

= (1-a)³W(0) + (1-a)²[R₁(t₁) + R₂(t₁) - rR₃(t₁)] + (1-a)[R₁(t₂) + R₂(t₂) - rR₃(t₂)] + (1-a)⁰[R₁(t₃) + R₂(t₃) - rR₃(t₃)]

Generalizing:

$$W(t_t) = (1-a)^{t}\,W(0) + \sum_{i=1}^{t} (1-a)^{t-i}\,\left[R_1(t_i) + R_2(t_i) - rR_3(t_i)\right] \qquad (1)$$

Compare eqn. (1) to eqn. (2ʀ). They are not identical.

In generalized equation 1, when i = t = 1, W(tₜ) goes to W(t₁) = (1-a)W(0) + R₁(t₁) + R₂(t₁) - rR₃(t₁) as it should do.

However, Rich’s equation 2ʀ does not go to W(t₁) in the limiting case i = t = 1.

Instead 2ʀ becomes W(t₁) = (1-a)W(0) + (1-a)[R₁(0) + R₂(0) - rR₃(0)], which is not correct.

The R-factors should have their t₁ values, but do not. There are no Rn(0)’s because W(0) is an initial value that has no perturbations. Also, coefficient (1-a) should not multiply the Rn’s (look at eqn. 1ʀ).

So, equation 2ʀ is wrong. The 1ʀ→2ʀ transition is mathematically incoherent.
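The step-by-step substitution above can be checked numerically. The sketch below is only an illustration: it iterates the eqn. 1ʀ recursion directly and compares the result with the generalized sum obtained by repeated substitution, (1-a)ᵗW(0) plus the sum over i = 1…t of (1-a)ᵗ⁻ⁱ[R₁(tᵢ) + R₂(tᵢ) - rR₃(tᵢ)]. The function names, the seed, and all numerical values are arbitrary assumptions chosen for the check, and constant ‘a’ is assumed, as in the derivation.

```python
import random


def iterate(w0, a, r, R1, R2, R3):
    """Step the recursion W(t) = (1-a)W(t-1) + R1(t) + R2(t) - r*R3(t) forward."""
    w = w0
    for r1, r2, r3 in zip(R1, R2, R3):
        w = (1 - a) * w + r1 + r2 - r * r3
    return w


def closed_form(w0, a, r, R1, R2, R3):
    """Generalized sum: (1-a)^t W(0) + sum_{i=1..t} (1-a)^(t-i) [R1_i + R2_i - r*R3_i]."""
    t = len(R1)
    total = (1 - a) ** t * w0
    for i in range(1, t + 1):
        total += (1 - a) ** (t - i) * (R1[i - 1] + R2[i - 1] - r * R3[i - 1])
    return total


# Arbitrary illustrative values (assumptions, not taken from either essay).
rng = random.Random(0)
w0, a, r = 1.0, 0.1, 0.5
R1 = [rng.uniform(-1, 1) for _ in range(10)]
R2 = [rng.uniform(-1, 1) for _ in range(10)]
R3 = [rng.uniform(-1, 1) for _ in range(10)]

stepwise = iterate(w0, a, r, R1, R2, R3)
summed = closed_form(w0, a, r, R1, R2, R3)
print(abs(stepwise - summed) < 1e-9)
```

With t = 1 the generalized sum reduces to (1-a)W(0) + R₁(t₁) + R₂(t₁) - rR₃(t₁), which is the limiting case discussed in the text.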

There’s a further conundrum. Rich’s derivation, and mine, assume that coefficient ‘a’ is constant. If ‘a’ is constant, then (1-a) becomes raised to the power of the summation e.g., (1-a)ᵗW(0).

But there is no reason to think that coefficient ‘a’ should be a constant across a time-varying system. Why should every new W(t-1) have a constant fractional influence on W(t)?

Why should ‘a’ be constant? Other than convenience.

Rich then defined E[] = expectation value and V[] = variance = (standard deviation)², and assigned that:

E[R₁(t)] = bt+c

E[R₂(t)] = d

E[R₃(t)] = 0.

Following this, Rich allowed (leaving the derivation to the student) that, “Then a modicum of algebra derives

“E[W(t)] = b(at + a-1 + (1-a)ᵗ⁺¹)/a² + (c+d)(1 - (1-a)ᵗ)/a + (1-a)W(0)” (3ʀ)

Evidently 3ʀ was obtained by manipulating 2ʀ (can we see the work, please?). But as 2ʀ is incorrect, nothing worthwhile is learned. We’re told that eqn. 3ʀ → 4ʀ as coefficient ‘a’ → 0.

E[W(t)] = bt(t+1)/2 + (c+d)t + W(0) (4ʀ)

A Second Diversion into Dimensional Analysis:

Rich assigned E[R₁(t)] = bt+c. Up through eqn. 2ʀ, ‘t’ was integer time. In E[R₁(t)] it has become a coefficient. We know from eqn. 1ʀ that R₁(t) must have the identical dimensional unit carried by W(t), which is, e.g., Celsius.

We also know R₁(t) is in Wm⁻², but W(t) is in Celsius (C). Factor “bt” must be in the same Celsius units as [W(t)]. Is the dimension of b, then, Celsius/time? How does that work? The dimension of ‘c’ must also be Celsius. What is the rationale of those assignments?

The assigned E[R₁(t)] = bt+c has the formula of an ascending straight line of intercept c, slope b, and time the abscissa.

How convenient it is, to assume a linear behavior for the black box M(t) and to assign that linearity before ever (supposedly) considering the appropriate form of a GCM air temperature emulator. What rationale determined that convenient form? Other than opportunism?

The definition of R₁(t) was, “…the component which represents changes in major causal influences, such as the sun and carbon dioxide.”

So, a straight line now represents the major causal influence of the sun or of CO2. How was that decided?

Next, multiplying through term 1 in 4ʀ, we get bt(t+1)/2 = (bt²+bt)/2. How do both bt² and bt have the units of Celsius required by E[R₁(t)] and W(0)?

Factor ‘t’ is in units of time. The internal dimensions of bt(t+1)/2 are incommensurate. The parenthetical sum is physically meaningless.
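The unit bookkeeping in this argument can be made mechanical. The sketch below is a toy dimension tracker, not a real units library: the `Dim` class and the `commensurate` helper are hypothetical, introduced only for illustration. It assumes the reading used in the text, namely that ‘t’ carries units of time and that ‘b’ carries Celsius per unit time so that ‘bt’ comes out in Celsius. Under those assumptions bt² carries Celsius·time, and adding it to bt is flagged.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dim:
    """Exponents of (temperature, time). A toy tracker for illustration only."""
    temp: int = 0
    time: int = 0

    def __mul__(self, other):
        # Multiplying quantities adds their dimension exponents.
        return Dim(self.temp + other.temp, self.time + other.time)

    def __add__(self, other):
        # Addition is defined only for quantities of identical dimension.
        if self != other:
            raise ValueError(f"incommensurate: {self} + {other}")
        return self


def commensurate(x, y):
    """Return True when x + y is dimensionally allowed."""
    try:
        x + y
        return True
    except ValueError:
        return False


CELSIUS = Dim(temp=1)
TIME = Dim(time=1)
B = Dim(temp=1, time=-1)   # assumption: b in Celsius per unit time

bt = B * TIME              # Celsius
bt2 = B * TIME * TIME      # Celsius * time

print(commensurate(bt, CELSIUS))  # bt can be added to a temperature
print(commensurate(bt2, bt))      # bt^2 + bt mixes different dimensions
```

If ‘t’ were instead read as a pure step count with no units, both terms would carry the units of ‘b’ alone; the sketch covers only the time-valued reading.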

Continuing:

Rich’s final equation for the total variance of his emulator,

Var[W(t)] = (s₁² + s₂² + s₃² - 2r s₂ s₃)(1 - (1-a)²ᵗ)/(2a-a²) (5ʀ)

included all the Rn(t) terms and the assumed covariance of his R₂(t) and R₃(t).

Compare his emulator 4ʀ with the GCM air temperature projection emulator in my paper:

[equation image not recovered] (2)

In contrast to Rich’s emulator, eqn. 2 has no offsetting covariances. Not only that, all the ΔT-determining coefficients in eqn. 2 except fCO₂ are givens. They have no uncertainty variance at all.

In short, both Rich’s emulator itself and its dependent variances are completely irrelevant to any evaluation of the GCM projection emulator (eqn. 2). Completely irrelevant, even if they were correctly derived, which they were not.

Parenthetical summary comments on Rich’s “Summary of section B:”

“A good emulator can mimic the output of the black box.”

(Trivially true.)

“A fairly general iterative emulator model (1) is presented.”

(Never once used to actually emulate anything, and of no focused relevance to GCM air temperature projections.)

“Formulae are given for expectation and variance of the emulator as a function of time t and various parameters.”

(An emulator that is critically vacant and mathematically incoherent, and with an inapposite variance.)

“The two extra parameters, a, and R3(t), over and above those of Pat Frank’s emulator, can make a huge difference to the evolution.”

(Extra parameters in an emulator that does not deploy the formal structure of the GCM emulator, and missing any analytically equivalent factors. The extra parameters are ad hoc, while ‘a’ is incorrectly specified in 3ʀ and 4ʀ. The emulator is critically irrelevant and its expansion in ‘a’ is wrong.)

“The “magic” component R3(t) with anti-correlation -r to R2(t) can greatly reduce model error variance whilst retaining linear growth in the absence of decay.”

(Component R₃(t) is likewise ad hoc. It has no justified rationale. That R₃(t) has a variance at all requires its rejection (likewise rejection of R₁(t) and R₂(t)) because the coefficients in the emulator in the paper (eqn. 2 above) have no associated uncertainties.)

“Any decay rate a>0 completely changes the propagation of error variance from linear growth to convergence to a finite limit.”

(The behavior of a critically irrelevant emulator engenders a deserved, ‘so what?’

Further, a>0 causes general decay only by allowing the mistaken derivation that put the (1-a) coefficient into the Rn(t) factors in 2ʀ.)

Section I conclusion: The emulator construction itself is incongruous. It includes an unwarranted persistence. It has terms of convenience that do not map onto the target GCM projection emulator. The -rR₃(t) term cannot behave as described.

The transition from eqn. 2ʀ to eqn. 3ʀ is mathematically incoherent. The derivations following that employ eqn. 3ʀ are therefore wrong, including the variances.

The eqn. 1ʀ emulator itself is ad hoc. Its derivation is without regard to the behavior of climate models and of physical reasoning. Its ability to emulate a GCM air temperature projection is undemonstrated.

II. Problems with “New Parameters” Section C:

Rich rationalized his introduction of so-called decay parameter ‘a’ in the “Parameter” section C of his post. He introduced this equation:

M(t) = b + cF(t) + dH(t-1), 6ʀ

where M = temperature, F = forcing, and H(t) is “heat content”.

The ‘b’ term might be the ‘b’ coefficient assigned to E[R₁(t)] above, but we are not told anything about it.

I’ll summarize the problem. Coefficients ‘c’ and ‘d’ are actually functions that transform forcing and heat flux (not heat content) in Wm⁻², into their respectively caused temperature, Celsius. They are not integers or real numbers.

However, Rich’s derivation treats them as real number coefficients. This is a fatal problem.

For example, in equation 6ʀ above, function ‘d’ transforms heat flux H(t-1) into its consequent temperature, Celsius. However, the final equation of Rich’s algebraic manipulation ends with ‘d’ inappropriately operating on M(0), the initial temperature. Thus, he wrote:

“M(t) = b + cF(t) + d(H(0) + e(M(t-1)-M(0)) = f + cF(t) + (1-a)M(t-1) (7ʀ)

where a = 1-de, f = b+dH(0)-deM(0). (my bold)”

There is no physical justification for a “deM(0)” term; d cannot operate on M(0).

Rich also assigned “a = 1-de,” where ‘e’ is an integer fraction, but again, ‘d’ is an operator function; ‘d’ cannot operate on ‘e’. The final (1-a)M(t-1) term is a cryptic version of deM(t-1), which contains the same fatal assault on physical meaning. Function ‘d’ cannot operate on temperature M.

Further, what is the meaning of an operator function standing alone with nothing on which to operate? How can “1-de” be said to have a discrete value, or even to mean anything at all?

Other conceptual problems are in evidence. We read, “Now by the Stefan-Boltzmann equation M [temperature – P] should be related to F^¼ …” Rather, S-B says that M should be related to H^¼ (H is here taken to be black body radiant flux). According to climate models M is linearly related to F.

We’re also told, “Next, the heat changes by an amount dependent on the change in temperature: …” whereas instead, physics says the opposite: temperature changes by an amount dependent on the change in the heat (kinetic energy). That is, temperature depends on atomic/molecular kinetic energy.

Rich finished with, “Roy Spencer, who has serious scientific credentials, had written “CMIP5 models do NOT have significant global energy imbalances causing spurious temperature trends because any model systematic biases in (say) clouds are cancelled out by other model biases”.”

Roy’s comment was originally part of his attempted disproof of my uncertainty analysis. It completely missed the point, in part because it confused physical error with uncertainty.

Roy’s and Rich’s offsetting errors do nothing to remove uncertainty from the prediction of a physical model.

Rich went on, “This means that in order to maintain approximate Top Of Atmosphere (TOA) radiative balance, some approximate cancellation is forced, which is equivalent to there being an R3(t) with high anti-correlation to R2(t). The scientific implications of this are discussed further in Section I.”

The only, repeat only, scientific implication of offsetting errors is that they reveal areas requiring further research, that the theory is inadequate, and that the predictive capacity is poor.

Rich’s approving mention of Roy’s mistake evidences that Rich, too, apparently does not see the distinction between physical error and predictive uncertainty. Tim Gorman especially, and others, have repeatedly pointed out the distinction to Rich, e.g., here, here, here, here, and here, but to no obvious avail.

Conclusions regarding the Parameter section C: analytically impossible, physically disjointed, wrongly supposes offsetting errors increase predictive reliability, wrongly conflates physical error with predictive uncertainty.

And once again, no demonstration that the proposed emulator can emulate anything relevant.

III. Problems with “Emulator Parameters” Section D:

In Section I above, I promised to show that Rich’s emulator would remain irrelevant, even after he added my numbers to it.

In his “Emulator Parameters” section Rich started out with, “Dr. Pat Frank’s emulator falls within the general model above.” This view could not possibly be more wrong.

First, Rich composed his emulator with my GCM air temperature projection emulator in mind. He inverted significance to say the originating formalism falls within the limit of a derivative composition.

Again, the GCM projection emulator is:

[equation image not recovered] (2 again)

Rich’s emulator is W(t) = (1-a)W(t-1) + R₁(t) + R₂(t) + (-r)R₃(t) (1ʀ again)

(In II above, I showed that his alternative, M(t) = f + cF(t) + (1-a)M(t-1), is incoherent and therefore not worth considering further.)

In Rich’s emulator, temperature T₂ has some persistence from T₁. This dependence is nowhere in the GCM projection emulator.

Further, in the GCM emulator (eqn. 2-again), the temperature of time t-1 makes no appearance at all in the emulated air temperature at time t. Rich’s 1ʀ emulator is constitutionally distinct from the GCM projection emulator. Equating them is to make a category mistake.

Analyzing further, emulator R₁(t) is a, “component which represents changes in major causal influences, such as the sun and carbon dioxide,”

Rich’s R₁(t) describes all of [equation image not recovered] in the GCM projection emulator.

Rich’s R₁(t) thus exhausts the entire GCM projection emulator. What then is the purpose of his R₂(t) and R₃(t)? They have no analogy in the GCM projection emulator. They have no role to transfer into meaning.

The R₂(t) is “a strong contribution with observably high variance, for example the Longwave Cloud Forcing (LCF).” The GCM projection emulator has no such term.

The R₃(t) is, “a putative component which is negatively correlated with R2(t)…” The GCM projection emulator has no such term. R₃(t) has no role to play in any analytical analogy.

Someone might insist that Rich’s emulator is like the GCM projection emulator after his (1-a)W(t-1), R₂(t), and (-r)R₃(t) terms are thrown out.

So, we’re left with this deep generalization: Rich’s emulator-emulator pared to its analogical essentials is M(tᵢ) = R(tᵢ),

where R(tᵢ) = [equation image not recovered].

Rich went on to specify the parameters of his emulator: “The constants from [Pat Frank’s] paper, 33K, 0.42, 33.3 Wm-2, and +/-4 Wm-2, the latter being from errors in LCF, combine to give 33*0.42/33.3 = 0.416 and 0.416*4 = 1.664 used here.”

Does anyone see a ±4 Wm⁻² in the GCM projection emulator? There is no such term.

Rich has made the same mistake as did Roy Spencer (one of many). He supposed that the uncertainty propagator (the right-side term in paper eqn. 5.2) is the GCM projection emulator.

It isn’t.

Rich then presented the conversion of his general emulator into his view of the GCM projection emulator: “So we can choose a = 0, b = 0, c+d = 0.416 F(t) where F(t) is the new GHG forcing (Wm-2) in period t, s1=0, s2=1.664, s3=0”, and then derive

“W(t) = (c+d)t + W(0) +/- sqrt(t) s2” (8ʀ)

There are Rich’s mistakes made explicit: his emulator, eqn. 8ʀ, includes persistence in the W(0) term and a ±sqrt(t) s2 term, neither of which appear anywhere in the GCM projection emulator. How can eqn. 8ʀ possibly be an analogy for eqn. 2?

Further, including “+/- sqrt(t) s2” will cause his emulator to produce two values of W(t) at every time-step.

One value W(t) stems from the positive root of sqrt(s2) and the other W(t) from the negative root of sqrt(s2). A plot of the results will show two W(t) trends, one perhaps rising while the other falls.

To see this mistake in action, see the first Figure in Roy Spencer’s critique.

The “+/-” term in Rich’s emulator makes it not an emulator.

However, a ‘±’ term does appear in the error propagator:

±uᵢ(T) = [fCO₂ × 33 K × (±4 Wm⁻²)/F₀]; see eqns. 5.1 and 5.2 in the paper.
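The arithmetic behind these numbers is short enough to write out. The sketch below evaluates the per-step propagator term using the constants quoted above from the paper (fCO₂ = 0.42, 33 K, F₀ = 33.3 Wm⁻², ±4 Wm⁻²), and, separately, the sqrt(t)·s2 width that appears in eqn. 8ʀ. The function names are illustrative assumptions. The results are treated as interval half-widths, not as temperatures.

```python
import math

F_CO2 = 0.42         # dimensionless, as quoted from the paper
GREENHOUSE_K = 33.0  # K
F0 = 33.3            # W m^-2
LCF_ERROR = 4.0      # W m^-2, magnitude of the +/- calibration error


def per_step_uncertainty(f_co2=F_CO2, k=GREENHOUSE_K, err=LCF_ERROR, f0=F0):
    """Magnitude of the per-step term: fCO2 * 33 K * (4 W m^-2) / F0, in K."""
    return f_co2 * k * err / f0


def sqrt_t_width(s2, t):
    """Half-width sqrt(t) * s2 as it appears in eqn. 8R, for t steps."""
    return math.sqrt(t) * s2


u = per_step_uncertainty()
print(round(u, 3))                     # per-step uncertainty magnitude, K
print(round(sqrt_t_width(u, 100), 2))  # width after 100 steps, K
```

Carrying full precision gives about 1.665 per step; the 1.664 in the quoted passage comes from rounding 0.416 before multiplying by 4.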

It should now be obvious that Rich’s emulator is nothing like the GCM projection emulator.

Instead, it represents a category mistake. It is not only wrongly derived, it has no analytical relevance at all. It is conceptually opposed to the GCM projection emulator it was composed to critically appraise.

Rich’s emulator is ad hoc. It was constructed with factors he deemed suitable, but with no empirical reference. Theory without empiricism is philosophy at best; never science.

Rich then added in certain values taken from the GCM projection emulator and proceeded to zero out everything else in his equation. The result does not demonstrate equivalence. It demonstrates tendentiousness: elements manipulated to achieve a predetermined end. This approach is diametrically opposed to actual science.

The rest of the Emulator Parameters section elaborates speculative constructs of supposed variances given Rich’s irrelevant emulator. For example, “Now if we choose b = a(c+d) then that becomes (c+d)(t+1), etc. etc.” This is to choose without any reference to any specific system or any known physical GCM error. The b = a(c+d) is an ungrounded levitated term. It has no substantive basis.

The rest of the variance speculation is equally irrelevant, and in any case derives from an unreservedly wrong emulator.

Nowhere is its competence demonstrated by, e.g., emulating a GCM air temperature projection.

I will not consider his Section D further, except to note that Rich’s Case 1 and Case 2 clearly imply that he considers the variation of model runs about the model projection mean to be the centrally germane measure of uncertainty.

It is not.

The precision/accuracy distinction was discussed in the introductory comments above. Run variation supplies information only about model precision: run repeatability. The analysis in the paper concerned accuracy.
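The distinction can be made concrete with a small numerical sketch. The run values and the observed value below are invented purely for illustration; they are not taken from any GCM or from the paper.

```python
import math
import statistics

# Hypothetical outputs of five runs of one model, and a hypothetical observation.
runs = [2.90, 3.10, 3.00, 3.05, 2.95]
observed = 2.00

# Precision: spread of the runs about their own mean (repeatability).
run_mean = statistics.fmean(runs)
precision_sd = statistics.pstdev(runs)

# Accuracy: root-mean-square difference between the runs and the observation.
squared_errors = [(r - observed) ** 2 for r in runs]
accuracy_rmse = math.sqrt(statistics.fmean(squared_errors))

print(f"run mean        = {run_mean:.2f}")
print(f"precision (SD)  = {precision_sd:.3f}")
print(f"accuracy (RMSE) = {accuracy_rmse:.3f}")
```

In this made-up case the runs agree with one another to within about 0.07 yet sit about 1.0 away from the observation. A tight run spread says nothing about how close the model is to the observable.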

This distinction is absolutely central, and was of immediate focus.

Introduction paragraph 2:

“Published GCM projections of the GASAT typically present uncertainties as model variability relative to an ensemble mean (Stainforth et al., 2005; Smith et al., 2007; Knutti et al., 2008), or as the outcome of parameter sensitivity tests (Mu et al., 2004; Murphy et al., 2004), or as Taylor diagrams exhibiting the spread of model realizations around observations (Covey et al., 2003; Gleckler et al., 2008; Jiang et al., 2012). The former two are measures of precision, while observation-based errors indicate physical accuracy. Precision is defined as agreement within or between model simulations, while accuracy is agreement between models and external observables (Eisenhart, 1963, 1968; ISO/IEC, 2008). (bold added)

…

“However, projections of future air temperatures are invariably published without including any physically valid error bars to represent uncertainty. Instead, the standard uncertainties derive from variability about a model mean, which is only a measure of precision. Precision alone does not indicate accuracy, nor is it a measure of physical or predictive reliability. (added bold)

“The missing reliability analysis of GCM global air temperature projections is rectified herein.”

It is evidently possible to read the above and fail to grasp it. Rich’s entire approach to error and variance ignores it and is thereby misguided. He has repeatedly confused model precision with predictive accuracy.

That mistake is fatal to critical relevance. It removes any valid application of Rich’s critique to my work or to the GCM projection emulator.

Finally, I’ll comment on his last paragraph: “Pat Frank’s paper effectively uses a particular W(t;u) (see Equation (8) above) which has fitted mw(t;u) to mm(t), but ignores the variance comparison. That is, s2 in (8) was chosen from an error term from LCF without regard to the actual variance of the black box output M(t).”

The first sentence says that I fitted “mw(t;u) to mm(t).” That is, Rich supposed that my analysis consisted of fits to the model mean.

He is wrong. The analysis focused on single projection runs of individual models.

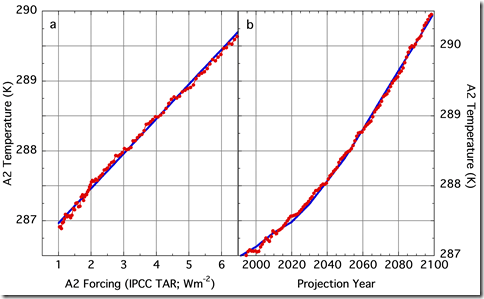

Methodological SI Figure S3-2 shows that each fit tested a single temperature projection run of a single target GCM plotted against a standard of GHG forcing (SRES, Meinshausen, or other).

SI Figure S3-2. Left: fit of cccma_cgcm3_1_t63 projected global average temperature plotted vs SRES A2 forcing. Right: emulation of the cccma_cgcm3_1_t63 A2 air temperature projection. Every fit had only one important degree of freedom.

Only Figure 7 showed emulation of a multi-model projection mean. All the other 68 were single-model projection runs. All of which Rich apparently missed.

There is no ambiguity in what I did, which is not what Rich supposed I did.

The second sentence, “That is, s2 in (8) was chosen from an error term from LCF without regard to the actual variance of the black box output M(t),” is also factually wrong. Twice.

First, there is no LCF term in the emulator, nor any standard deviation. The “s2” is a fantasy.

Second, the long wave cloud forcing (LWCF) calibration error in the uncertainty propagator is the annual average error CMIP5 GCMs make in simulating annual global cloud fraction (CF).

That is, LWCF calibration error is exactly the actual [error] variance of the black box output M(t) with respect to observed global cloud fraction.

Rich’s “the actual variance of the black box output M(t)” refers to the variance of individual GCM air temperature projection runs around a projection mean; a precision metric.

The accuracy metric of model variance with respect to observation is evidently lost on Rich. He brought up inattention to bare precision as if it faulted an analysis concerned with accuracy.

This fatal mistake is a commonplace among the critics of my paper.

It shows a foundational inability to effectuate any scientifically valid criticism at all.

The reason for entering the LWCF error statistic into the uncertainty propagator is given within the paper (p. 10):

“GHG forcing enters into and becomes part of the global tropospheric thermal flux. Therefore, any uncertainty in simulated global tropospheric thermal flux, such as LWCF error, must condition the resolution limit of any simulated thermal effect arising from changes in GHG forcing, including global air temperature. LWCF calibration error can thus be combined with ΔFᵢ in equation 1 to estimate the impact of the uncertainty in tropospheric thermal energy flux on the reliability of projected global air temperatures.”

This explanation seems opaque to many for reasons that remain obscure.

Citations Zhang et al. (2005) and Dolinar et al. (2015) gave similar estimates of LWCF calibration error.

Summary conclusions about the Emulator Parameter Section D:

1) The proposed emulator is ad hoc and tendentious.

2) The proposed emulator is constitutively wrong.

· It wrongly includes persistence.

· It wrongly includes a cloud forcing term (or the like).

· It wrongly includes an uncertainty statistic.

3) Confused the uncertainty propagator with the GCM projection emulator.

4) Mistaken focus on precision in a study about accuracy.

Or, perhaps, ignorance of the concept of physical accuracy itself.

5) Wrongly imputed that the study focused on GCM projection means.

6) Never once demonstrated that the emulator can actually emulate.

IV. Problems with “Error and Uncertainty” Section E:

So far, we have found that Rich’s emulator analysis is ad hoc, tendentious, constitutively wrong, dimensionally impossible, mathematically incoherent, confuses precision with accuracy, includes incongruous variances, and is empirically unvalidated. His analysis could hardly be more bolloxed up.

I here step through a few of his Section E errors, which always seem to simplify things for him. Quotes are marked “R:” followed by a comment.

R: “Assuming that X is a single fixed value, then prior to measurement, M-X is a random variable representing the error,…”

Except when the error is systematic, stemming from uncontrolled variables. In that case M – X is a deterministic variable of no fixed mean, of a non-normal dispersion, and of an unknowable value. See the further analysis in the Appendix.

R: “+/-sm is described by the JCGM 2.3.1 as the “standard” uncertainty parameter.”

Rich is being a bit fast here. He is implying the JCGM Section 2.3.1 definition of “standard uncertainty” is limited to the SD of random errors.

The JCGM is the Evaluation of measurement data – Guide to the expression of uncertainty in measurement, the standard guide to the statistical analysis of measurements and their errors provided by the Bureau International des Poids et Mesures.

The JCGM actually says that the standard uncertainty is “uncertainty of the result of a measurement expressed as a standard deviation,” which is rather more general than Rich allowed.

The quotes below show that the JCGM includes systematic error as contributing to uncertainty.

Under E.3 “Justification for treating all uncertainty components identically” the JCGM says,

The focus of the discussion of this subclause is a simple example that illustrates how this Guide treats uncertainty components arising from random effects and from corrections for systematic effects in exactly the same way in the evaluation of the uncertainty of the result of a measurement. It thus exemplifies the viewpoint adopted in this Guide and cited in E.1.1, namely, that all components of uncertainty are of the same nature and are to be treated identically. (my bold)

Under JCGM E.3.1 and E.5.2, we have that the variance of a measurement wᵢ of true value μᵢ is given by σᵢ² = E[(wᵢ – μᵢ)²], which is the standard expression for error variance.

After the usual caveats about [the] expectation of the probability distribution of each εᵢ is assumed to be zero, E(εᵢ) = 0, …, the JCGM notes that,

It is assumed that probability is viewed as a measure of the degree of belief that an event will occur, implying that a systematic error may be treated in the same way as a random error and that εᵢ represents either kind. (my bold)

In other words, the JCGM advises that systematic error is to be treated using the same statistical formalism as is used for random error.

R: “The real error statistic of interest is E[(M-X)²] = E[((M-mm)+(mm-X))²] = Var[M] + b², covering both a precision component and an accuracy component.”

Rich then referenced that equation to my paper and to long wave cloud forcing (LWCF; Rich’s LCF) error. However, this is a fundamental mistake.

In Rich’s equation above, the bias, b = M-mm = a constant. Among GCMs however, step-wise cloud bias error varies across the global grid-points for each GCM simulation. And it also varies among GCMs themselves. See paper Figure 4 and SI Figure S6-1.

The factor (M-mm) = b, above, should therefore be (Mᵢ-mm) = bᵢ because b varies in a deterministic but unknown way with every Mᵢ.

A correct analysis of the case is:

E[(Mᵢ-X)²] = E[((Mᵢ-mm)+(mm-X))²] = Var[M] + Var[b]

Systematic error is discussed in more detail in the Appendix.

Rich goes on, “But the idea of converting variances and covariances of input parameter errors into output error via differentiation is well established, and is given in Equation (13) of the JCGM.”

Equation (13) of the JCGM gives the formula for the error variance in y, u²c(y), but describes it this way:

The combined variance, u²c(y), can therefore be viewed as a sum of terms, each of which represents the estimated variance associated with the output estimate y generated by the estimated variance associated with each input estimate xᵢ. (my bold)

That is, the combined variance, u²c(y), is the variance that results from considering all forms of error; not just random error.

Under JCGM 3.3.6:

The standard uncertainty of the result of a measurement, when that result is obtained from the values of a number of other quantities, is termed combined standard uncertainty and denoted by uc. It is the estimated standard deviation associated with the result and is equal to the positive square root of the combined variance obtained from all variance and covariance (C.3.4) components, however evaluated, using what is termed in this Guide the law of propagation of uncertainty (see Clause 5). (my bold)

Under JCGM E.4.4 EXAMPLE:

The systematic effect due to not being able to treat these terms exactly leads to an unknown fixed offset that cannot be experimentally sampled by repetitions of the procedure. Thus, the uncertainty associated with the effect cannot be evaluated and included in the uncertainty of the final measurement result if a frequency-based interpretation of probability is strictly followed. However, interpreting probability on the basis of degree of belief allows the uncertainty characterizing the [systematic] effect to be evaluated from an a priori probability distribution (derived from the available knowledge concerning the inexactly known terms) and to be included in the calculation of the combined standard uncertainty of the measurement result like any other uncertainty. (my bold)

The JCGM says that the combined variance, u²c(y), includes systematic error.

The systematic error stemming from uncontrolled variables becomes a variable component of the output; a component that may change unknowably with every measurement. Systematic error then necessarily has an unknown and almost certainly non-normal dispersion (see the Appendix).

The JCGM further stipulates that systematic error is to be treated using the same mathematical formalism as random error.

Above we saw that uncontrolled deterministic variables produce a dispersion of systematic error biases in an extended series of measurements and in GCM simulations of global cloud fraction.

That is, the systematic error is a “fixed offset” = bᵢ only in the Mᵢ time-step. But the bᵢ vary in some unknown way across the n-fold series of Mᵢ.

In light of the JCGM discussion, the dispersion of systematic error, bᵢ, requires that any complete error variance include Var[b].

The dispersion of the bᵢ can be determined only by means of a calibration experiment against a known X carried out under conditions as identical as possible to the experiment.

The empirical methodological calibration error, Var[b] of X, is then applied to condition the result of every experimental determination or observation of an unknown X; i.e., it enters the reliability statement of the result.

In Example 1, the 1-foot ruler, Rich immediately assumed away the problem. Thus, “the manufacturer assures us that any error in that interval is equally likely[, but I will] write 12+/-_0.1 …, where the _ denotes a uniform probability distribution, instead of a single standard deviation for +/-.”

That is, rather than accept the manufacturer’s stipulation that all deviations are equally likely, Rich converted the uncertainty into a random dispersion, in which all deviations are not equally likely. He has assumed knowledge where there is none.

He wrote, “If I have just one ruler, it is hard to see how I can do better than get a table which is 120+/-_1.0″.” But that is wrong.

The unknown error in any ruler is a rectangular distribution of -0.1″ to +0.1″, with all possibilities equally likely. Ten measurements with a ruler of unknown specific error could be anywhere from 1″ to -1″ in error. The expectation interval is (1″ - (-1″))/2 = 1″. The standard uncertainty is then 1″/sqrt(3) = ±0.58″, thus 120±0.58″.

He then wrote that if one instead made ten measurements using ten independently machined rulers then the uncertainty of measurement = “sqrt(10) times the uncertainty of each.” But again, that is wrong.

The original stipulation is equal likelihood across ±0.1″ of error for every ruler. For ten independently machined rulers, every ruler has a length deviation equally likely to be anywhere within -0.1″ to 0.1″. That means the true total error using 10 independent rulers can again be anywhere from 1″ to -1″.

The expectation interval is again (1″ - (-1″))/2 = 1″, and the standard uncertainty after using ten rulers is 1″/sqrt(3) = ±0.58″. There is no advantage, and no loss of uncertainty at all, in using ten independent rulers rather than one. This is the outcome when knowledge is lacking, and one has only a rectangular uncertainty estimate, a not uncommon circumstance in the physical sciences.
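The rectangular-interval arithmetic above can be checked with a short sketch. It follows the text in treating the total error as a single rectangular interval, and uses the half-width divided by sqrt(3) as the standard uncertainty; the function name is a hypothetical helper introduced only for illustration.

```python
import math


def rectangular_standard_uncertainty(lower, upper):
    """Standard uncertainty of a rectangular interval: half-width / sqrt(3)."""
    half_width = (upper - lower) / 2.0
    return half_width / math.sqrt(3.0)


# One 12-inch ruler whose length error lies anywhere in -0.1" to +0.1".
u_one_ruler = rectangular_standard_uncertainty(-0.1, 0.1)

# Ten ruler-lengths, with the total error bounded by -1" to +1" as argued above.
u_ten_lengths = rectangular_standard_uncertainty(-1.0, 1.0)

print(f'one ruler:   +/-{u_one_ruler:.3f}"')
print(f'ten lengths: +/-{u_ten_lengths:.2f}"')
```

The same function applies whether the ten lengths come from one ruler or from ten, because under this reading only the bounds of the total error are known in either case.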

Rich’s mistake is founded in his immediate recourse to pseudo-knowledge.

R: “We know by symmetry that the shortest plus longest [of a group of ten rulers] has a mean error of 0…” But we do not know that because every ruler is independently machined. Every length error is equally likely. There is no reason to assume a normal distribution of lengths, no matter how many rulers one has. The shortest may be -0.02″ too short, and the longest 0.08″ too long. Then ten measurements produce a net error of 0.3″. How would anyone know? One has no way of knowing the true error in the physical length of a shortest and a longest ruler.

The length uncertainty of any one ruler is [(0.1-(-0.1))/2]/sqrt(3) = ±0.058″. The only reasonable stipulation one might make is that the shortest ruler is (0.05±0.058)″ too short and the longest (0.05±0.058)″ too long. Then 5 measurements using each ruler yields a measurement with uncertainty of ±0.18″.
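The text does not state how the ±0.18″ figure was combined. One reading that reproduces it, offered here only as an assumption, is a root-sum-square of ten per-measurement uncertainties of ±0.058″. The sketch also checks the 0.3″ net error of the hypothetical shortest and longest rulers.

```python
import math

u_single = 0.1 / math.sqrt(3.0)   # rectangular uncertainty of one ruler, about 0.058"
n_measurements = 10               # five with the shortest ruler, five with the longest

# Assumed combination rule: root-sum-square of the per-measurement uncertainties.
u_combined = math.sqrt(n_measurements * u_single ** 2)

# Net error for the hypothetical case in the text: rulers off by -0.02" and +0.08",
# each used five times.
net_error = 5 * (-0.02) + 5 * 0.08

print(f'combined uncertainty: +/-{u_combined:.2f}"')
print(f'net error:            {net_error:.2f}"')
```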

Complex variance estimates notwithstanding, Rich assumed away all the difficulty in the problem, wished his way back to random error, and enjoyed a happy dance.

Conclusion: Rich’s Section E is wrong, wherever it is not irrelevant.

1. He assumed random error when he should have considered deterministic error.

2. He badly misconstrued the message of the JCGM concerning systematic error, and the meaning of its equation (13).

3. He ignored the centrally important condition of uncontrolled variables, and the resulting unknowable variation of systematic error across the data.

4. He wrongly treated systematic error as a constant offset.

5. His treatment of rectangular uncertainty is wrong.

6. He then wished rectangular uncertainty into a random distribution.

7. He treated assumed distributions as if they were known distributions: OK in a paper on statistical conjectures, a failing grade in an undergraduate instrumental lab course, and death in a real-world lab.

V. Problems with Section F:

The first part concerning comparative uncertainty is speculative statistics and so is here ignored.

Problems with Rich’s Marked Ruler Example 2:

The discussion neglected the resolution of the ruler itself, typically 1/4 of the smallest division.

It also ignored the question of whether the lined division marks are uniformly and accurately spaced, another part of the resolution problem. This latter problem could be reduced with recourse to a high-precision ruler that includes a manufacturer’s resolution statement provided by the in-house engineers.

It ignored that the smallest divisions on a to-be-visually-appraised precision instrument are typically manufactured in light of the human ability to resolve the spaces.

To achieve real accuracy with a ruler, one would have to calibrate it at several internal intervals using a set of high-accuracy length standards. Good luck with that.

VI. Problems with Rich’s thermometer Example 3 Section G:

Rich brought up what was apparently my discussion of thermometer metrology, made in an earlier comment on another WUWT essay.

He mentioned some of the elements I listed as going into uncertainty in the read-off temperature including, “the thermometer capillary is not of uniform width, the inner surface of the glass is not perfectly smooth and uniform, the liquid inside is not of constant purity, the entire thermometer body is not at constant temperature. He did not include the fact that during calibration human error in reading the instrument may have been introduced.”

I no longer know where I made those comments (I searched but didn’t find them) and Rich provided no link. However, I would never have intended that list to be exhaustive. Anyone wondering about thermometer accuracy can do a lot worse than to read Anthony Watts’ post about thermometer metrology.

Among impacts on accuracy, Anthony mentioned hardening and shrinking of the glass in LiG thermometers over time. After 10 years, he said, the reading might be 0.7 C high. A process of slow hardening would impose a false warming trend over the entire decade. Anthony also mentioned that historical LiG meteorology thermometers were often graduated in 2 °F increments, yielding a resolution of ±0.5 °F = ±0.3 °C.

Rich mentioned none of that, in correcting my apparently incomplete list.

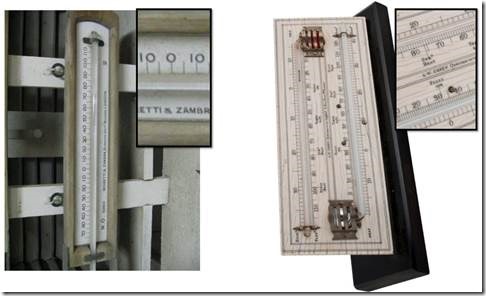

Here’s an example of a 19th century min-max thermometer with 2 °F divisions.

Louis Casella-type 19th century min-max thermometer with 2 °F divisions.

Image from the Yale Peabody Museum.

High-precision Louis Casella thermometers included 1 °F divisions.

Rich continued: “The interesting question arises as to what the (hypothetical) manufacturers meant when they said the resolution was +/-0.25K. Did they actually mean a 1-sigma, or perhaps a 2-sigma, interval? For deciding how to read, record, and use the data from the instrument, that information is rather vital.”

Just so everyone knows what Rich is talking about, pictured below are a couple of historical LiG meteorological thermometers.

Left: The 19th century Negretti and Zambra minimum thermometer from the Welland weather station in Ontario, Canada, mounted in the original Stevenson Screen. Right: A C.W Dixey 19th century Max-Min thermometer (London, after ca. 1870). Insets are close-ups.

The finest lineations in the pictured thermometers are 1 °F and are perhaps 1 mm apart. The Welland instrument served about 1892 – 1957.

The resolution of these thermometers is ±0.25 °F, meaning that smaller values to the right of the decimal are physically dubious. The 1880-82 observer at Welland, Mr. William B. Raymond, age about 20 years, apparently recorded temperatures to ±0.1 °F, a fine example of false precision.

In asking, “Did [the manufacturers] actually mean a 1-sigma, or perhaps a 2-sigma, interval?”, Rich is posing the wrong question. Resolution is not about error. It does not imply a statistical variable. It is a physical limit of the instrument, below which no reliable data are obtainable.

The modern Novalynx 210-4420 Series max-min thermometer below is, “made to U.S. National Weather Service specifications.”

The specification sheet (pdf) gives an accuracy of ±0.2 °C “above 0 °C.” That is a resolution-limit number, not a 1σ number or a 2σ number.

A ±0.2 °C resolution limit means the thermometers are not able to reliably distinguish between external temperatures differing by 0.2 °C or less. It means any finer reading is physically suspect.

The Novalynx thermometers record 95 degrees across 8 inches, so that each degree traverses 0.084″ (2.1 mm). Reading a temperature to ±0.2 °C requires the visual acuity to discriminate among five 0.017″ = 0.43 mm unmarked widths within each degree interval.
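The scale arithmetic can be verified in a few lines. The only inputs are the 95-degree range and 8-inch scale length given above; the variable names are illustrative.

```python
MM_PER_INCH = 25.4

scale_length_in = 8.0     # length of the scale, inches
scale_range_deg = 95.0    # degrees spanned by the scale

# Physical width of one degree on the scale.
inch_per_degree = scale_length_in / scale_range_deg
mm_per_degree = inch_per_degree * MM_PER_INCH

# Width corresponding to a 0.2-degree step (five such steps per degree).
inch_per_fifth = inch_per_degree / 5
mm_per_fifth = mm_per_degree / 5

print(f'one degree:     {inch_per_degree:.3f}" = {mm_per_degree:.1f} mm')
print(f'0.2-degree step: {inch_per_fifth:.3f}" = {mm_per_fifth:.2f} mm')
```

Halving the step again to 0.1 degree gives a width of roughly 0.2 mm, which is the scale of discrimination at issue in what follows.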

Historical thermometers were no better.

This leads to the question: even though the thermometer is accurate to ±0.2 °C, is it still reasonable to propose, as Rich did, that an observer should be able to regularly discriminate individual ±0.1 °C intervals within merging 0.22 mm blank widths? Hint: hardly.

Rich’s entire discussion is unrealistic, showing no sensitivity to the meaning of resolution limits, of accuracy, of the graduation of thermometers, or of limited observer acuity.

He wrote, “In the present [weather thermometer] example, I would recommend trying for t2 = 1/100, or as near as can be achieved within reason.” Rich’s t² is the variance of observer error, meaning he recommends reading to ±0.1 °C in thermometers that are not accurate to better than ±0.2 °C.

Rich finished by advising the manufacture of false data: “if the observer has the skill and time and inclination then she can reduce overall uncertainty by reading to a greater precision than the reference value. (my bold)”

Rich recommended false precision; a mistake undergraduate science and engineering students have flogged out of them from the very first day. But one that typifies consensus climatology.

His conclusion that, “Again, real life examples suggest the compounding of errors, leading to approximately normal distributions.” is entirely unfounded, based as it surely is on unrealistic statistical speculations. Rich considered no real-life examples at all.

The moral of Rich’s section G is that it is not prudent to give advice concerning methods about which one has no experience.

The whole thermometer section G is misguided and is yet another example, after the several prior, of an apparently very poor grasp of physical accuracy, of its meaning, and of its fundamental importance to all of science.

VII. Problems with “The implications for Pat Frank’s paper” Section H:

Rich began his Section H with a set of declarations about the implications of his various Sections, now known to be over-wrought or plain wrong. Stepping through:

Section B: Rich’s emulator is constitutively inapt. The derivation is both wrong and incoherent. Tendentiously superfluous terms promote a predetermined end. The analysis is dimensionally unsound and deploys unjustified assumptions. No empirical validation of claimed emulator competence.

Section C: incorrectly proposes that offsetting calibration errors promote predictive reliability. It includes an inverted Stefan-Boltzmann equation and improperly treats operators as coefficients. As in other sections, Section C evinces no understanding of accuracy.

Section D: displays confusion about precision and accuracy throughout. The GCM emulator (paper eqn. 1) is confused with the error propagator (paper eqn. 5.2), which is fatal to Section D. No empirical validation of claimed emulator competence. Fatally misconstrues the analytical focus of my paper to be GCM projection means.

Section E: again, falsely asserted that all measurement or model error is random and that systematic error is a fixed constant bias offset. It makes empirically unjustified and ad hoc assumptions about error normality. It self-advantageously misconstrued the JCGM description of standard uncertainty variance.

Section F: has unrealistic prescriptions about the use of rulers.

Section G: displays no understanding of actual thermometers and advises observers to record temperatures to false precision.

Rich wrote that, “The implication of Section C is that many emulators of GCM outputs are possible, and just because a particular one seems to fit mean values quite well does not mean that the nature of its error propagation is correct.”

There we see again Rich’s fatal mistake that the paper is critically focused on mean values. He also wrote there are many possible GCM emulators without ever demonstrating that his proposed emulator can actually emulate anything.

And again here, “Frank’s emulator does visibly give a decent fit to the annual means of its target,…”

However, the analysis did not fit annual means. It fit the relationship between forcing and projected air temperature.

The emulator itself reproduced the GCM air temperature projections. It did not fit them. Contra Rich, that performance is indeed, “sufficient evidence to assert that it is a good emulator.”

And in further fact, the emulator tested itself against dozens of individual GCM single air temperature projections, not projection means. SI Figures S4-6, S4-8 and S4-9 show that the fit residuals remain close to zero.

The tests showed beyond doubt that every tested GCM behaved as a linear extrapolator of GHG forcing. That invariable linearity of output behavior entirely justifies linear propagation of error.

Throughout, Rich’s analysis displays a thorough and comprehensively mistaken view of the paper’s GCM analysis.

The comments that finish his analysis demonstrate that case.

For example: “The only way to arbitrate between emulators would be to carry out Monte Carlo experiments with the black boxes and the emulators.” This recommends an analysis of precision, with no notice of the need for accuracy.

Repeatability over reliability.

If ever there was a sign that Rich’s approach fatally neglects science, that’s it.

This next paragraph really nails Rich’s mistaken thinking: “Frank’s paper claims that GCM projections to 2100 have an uncertainty of +/- at least 15K. Because, via Section D, uncertainty really means a measure of dispersion, this means that Equation (1) with the equivalent of Frank’s parameters, using many examples of 80-year runs, would show an envelope where a good proportion would reach +15K or more, and a good proportion would reach -15K or less, and a good proportion would not reach those bounds.”

First, it was his Section E, not Section D, that supposed uncertainty is the dispersion of a random variable.

Second, Section IV above showed that Rich had misconstrued the JCGM discussion of uncertainty. Uncertainty is not error. Uncertainty is the interval within which the true value should occur.

Section D.6.1 of the JCGM establishes the distinction:

[T]he focus of this Guide is uncertainty and not error.

And continuing:

The exact error of a result of a measurement is, in general, unknown and unknowable. All one can do is estimate the values of input quantities, including corrections for recognized systematic effects, together with their standard uncertainties (estimated standard deviations), either from unknown probability distributions that are sampled by means of repeated observations, or from subjective or a priori distributions based on the pool of available information; …

Unknown probability distributions sampled by means of repeated observations describes a calibration experiment and its result. Included among these is the comparison of a GCM hindcast simulation of global cloud fraction with the known observed cloud fraction.

Next, the ±15 C uncertainty does not mean some projections would reach “+15K or more” or “-15K or less.” Uncertainty is not error. The JCGM is clear on this point, as is the literature. Uncertainty intervals are not error magnitudes. Nor do they imply the range of model outputs.

The ±15 C GCM projection uncertainty is an ignorance width. It means that one has no information at all about the possible air temperature in year 2100.

Supposing that uncertainty propagated through a serial calculation directly implies a range of possible physical magnitudes is merely to reveal an utter ignorance of physical uncertainty analysis.

Rich’s mistake that an uncertainty statistic is a physical magnitude is also commonplace among climate modelers.

Among Rich’s Section H summary conclusions, the first is wrong, while the second and third are trivial.

The first is, “Frank’s emulator is not good in regard to matching GCM output error distributions.” There are two mistakes in that one sentence.

The first is that the GCM air projection emulator can indeed reproduce all the single air temperature projection runs of any given GCM. Rich’s second mistake is to suppose that GCM individual run variation about a mean indicates error.

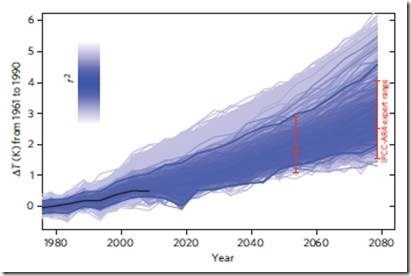

Regarding Rich’s first mistake, the accompanying Figure is taken from Rowlands, et al., (2012). It shows thousands of individual HadCM3L “perturbed physics” runs. Perturbed physics means the parameter sets are varied across their uncertainty widths. This produces a whole series of alternative projected future temperature states.

Original Figure Legend: “Evolution of uncertainties in reconstructed global-mean temperature projections under SRES A1B in the HadCM3L ensemble.”

This “perturbed physics ensemble” is described as “a multi-thousand-member ensemble of transient AOGCM simulations from 1920 to 2080 using HadCM3L,…”

Given knowledge of the forcings, the GCM air temperature projection emulator could reproduce every single one of those multi-thousand ensembled HadCM3L air temperature projections. As the projections are anomalies, emulator coefficient a = 0. The emulations would proceed by varying only the fCO₂ term. That is, the HadCM3L projections could be reproduced using the emulator with only one degree of freedom (see paper Figures 1 and 9).

So much for, “not good in regard to matching GCM output [so-called] error distributions.”

Second, the variance of the spread around the ensemble mean is not error, because the accuracy of the model projections remains unknown.

Studies of model spread, such as that of Rowlands, et al., (2012) reveal nothing about error. The dispersion of outputs reveals nothing but precision.

In calling that spread “error,” Rich merely transmitted his lack of attention to the distinction between accuracy and precision.

In light of the paper, every single one of the HadCM3L centennial projections is subject to the very large lower limit of uncertainty due to LWCF error, of the order ±15 C, at year 2080.

The uncertainty in the ensemble mean is the rms of the uncertainties of the individual runs. That is not error, either. Nor is it a suggestion of model air temperature extremes. It is the uncertainty interval that reflects the total unreliability of the GCMs and our total ignorance about future air temperatures.
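The distinction between ensemble spread and uncertainty can be illustrated with a minimal Python sketch. Everything in it is invented for illustration: the shared bias of 2.0, the run-to-run scatter of 0.1, and the assumption that every run carries the same ±15 C uncertainty. It is not a model of HadCM3L or of any GCM.

```python
import math
import random
import statistics

def rms(values):
    """Root-mean-square of a sequence of numbers."""
    return math.sqrt(sum(v * v for v in values) / len(values))

rng = random.Random(0)

# Hypothetical ensemble: every run shares the same unknown bias (2.0)
# plus a small run-to-run scatter (SD 0.1). All numbers are invented.
truth = 0.0
shared_bias = 2.0
runs = [truth + shared_bias + rng.gauss(0.0, 0.1) for _ in range(1000)]

spread = statistics.stdev(runs)               # precision: scatter about the ensemble mean
mean_error = statistics.fmean(runs) - truth   # accuracy: computable only if truth is known

# Per-run uncertainty, assumed here to be the same +/-15 C for every run.
per_run_u = [15.0] * len(runs)
ensemble_u = rms(per_run_u)                   # rms does not shrink with ensemble size

print(f"spread = {spread:.3f}, mean error = {mean_error:.3f}, ensemble u = {ensemble_u:.1f}")
```

In this toy case the spread is tight while the ensemble mean sits far from the true value, and the rms of the per-run uncertainties stays at the per-run value however many runs are added.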

Rich wrote, “The “systematic squashing” of the +/-4 W/m^2 annual error in LCF inside the GCMs is an issue of which I for one was unaware before Pat Frank’s paper.

The implication of comments by Roy Spencer is that there really is something like a “magic” component R3(t) anti-correlated with R2(t), … GCM experts would be able to confirm or deny that possibility.”

Another mistake: the ±4 Wm⁻² is not error. It is uncertainty: a statistic. The uncertainty is not squashed. It is ignored. The unreliability of the GCM projection remains even though errors are made to cancel in the calibration period.

GCMs do deploy offsetting errors, but studied model tuning has no impact on simulation uncertainty. Offset errors do not improve the underlying physical description.

Typically, error (not uncertainty) in long wave cloud forcing is offset by an opposing error in short wave cloud forcing. Tuning allows the calibration target to be reproduced, but it provides no reassurance about predictive reliability or accuracy.

General conclusions:

The entire analysis has no critical force.

The proposed emulator is constitutively inapt and tendentious.

Its derivation is mathematically incoherent.

The derivation is dimensionally unsound, abuses operator arithmetic, and deploys unjustified assumptions.

Offsetting calibration errors are incorrectly and invariably claimed to promote predictive reliability.

The Stefan-Boltzmann equation is inverted.

Operators are improperly treated as coefficients.

Accuracy is repeatedly abused and ejected in favor of precision.

The GCM emulator (paper eqn. 1) is fatally confused with the error propagator (paper eqn. 5.2).

The analytical focus of the paper is fatally misconstrued to be model means.

The difficulties of measurement error or model error are assumed away, by falsely and invariably asserting all error to be random.

Uncertainty statistics are wrongly and invariably asserted to be physical error.

Systematic error is falsely asserted to be a fixed constant bias offset.

Uncertainty in temperature is falsely construed to be an actual physical temperature.

Ad hoc assumptions about error normality are empirically unjustified.

The JCGM description of standard uncertainty variance is self-advantageously misconstrued.

The described use of rulers or thermometers is unrealistic.

Readers are advised to read and report false precision.

Appendix: A discussion of Error Analysis, including the Systematic Variety

Rich also posted a comment under his “What do you mean by ‘mean’” critique here attempting to show that systematic error cannot be included in an uncertainty variance.

Comments closed on the thread before I was able to finish a critical reply. The subject is important, so the reply is posted here as an Appendix.

In his comment, Rich assumed uncertainty to be the dispersion of a random variable with mean b and variance s². He concluded by claiming that an uncertainty variance cannot include bias errors.

Bias errors are another name for systematic errors, which Rich represented as a non-zero mean of error, ‘b.’

Below, I go through a number of relevant cases. They show that the mean of error, ‘b’, never appears in the formula for an error variance. They also show that the systematic errors from uncontrolled variables must be included in an uncertainty variance.

That is, the foundation of Rich’s derivation, which is:

“the uncertainty of a sum of n independent measurements with respective [error] means bᵢ and variances vᵢ is that given by JCGM 5.1.2 with unit differential: sqrt(sumᵢ g(vᵢ,bᵢ)²) where v = sumᵢ vᵢ, b = sumᵢ bᵢ.”

is wrong.

Given that mistake, the rest of Rich’s analysis there also fails, as demonstrated in the cases that follow.

Interestingly, the mean of error, ‘b,’ does not enter in the variance equation (10) in JCGM 5.1.2, either.

++++++++++++

For any set of n measurements xᵢ of X, xᵢ = X + eᵢ, where eᵢ is the total error in the xᵢ.

Total error eᵢ = rᵢ + dᵢ where rᵢ = random error and dᵢ = systematic error.

The errors eᵢ cannot be known unless the correct value of X is known.

In what follows “sumᵢ” means sum over the series of i where i = 1 → n, and Var[x] is the error variance of x.

Case 1: X is known.

1.1) When X is known, and only random error is present.

The experiment is analogous to an ideal calibration of method.

Then eᵢ = xᵢ – X, and Var[x] = [sumᵢ(xᵢ – X)²]/n = [sumᵢ(eᵢ)²]/n. In this case eᵢ = rᵢ only, because systematic error = dᵢ = 0.

Then [sumᵢ (eᵢ)²]/n = [sumᵢ(rᵢ)²]/n.

For n measurements of xᵢ the mean of error = b = sumᵢ(eᵢ)/n.

When only random error contributes, the mean of error b tends to zero at large n.

So, Var[x] = sumᵢ[(xᵢ – X)²]/n = sumᵢ[((X + rᵢ) – X)²]/n = sumᵢ[(rᵢ)²]/n

and the standard deviation describes a normal dispersion centered around 0.

Thus, when error is a random variable, the mean of error ‘b’ does not appear in the variance.

In case 1.1, Rich’s uncertainty, sqrt[sumᵢ g(vᵢ,bᵢ)²] is not correct and in any event should have been written sqrt[sumᵢ g(sᵢ,bᵢ)²].
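Case 1.1 can be checked numerically. The following is a minimal Python sketch under invented assumptions: a known value X = 10.0 and Gaussian random error of SD 0.5. The helper names `variance_about_known` and `mean_error` are mine, introduced only for illustration.

```python
import random

def variance_about_known(xs, X):
    """Var[x] = sum_i (x_i - X)^2 / n, the case 1.1 form with X known."""
    return sum((x - X) ** 2 for x in xs) / len(xs)

def mean_error(xs, X):
    """b = sum_i (x_i - X) / n."""
    return sum(x - X for x in xs) / len(xs)

rng = random.Random(1)
X = 10.0        # known true value (hypothetical)
sigma = 0.5     # SD of the random error (hypothetical)
rs = [rng.gauss(0.0, sigma) for _ in range(100_000)]
xs = [X + r for r in rs]          # d_i = 0: random error only

var_x = variance_about_known(xs, X)
var_r = sum(r * r for r in rs) / len(rs)   # sum_i (r_i)^2 / n
b = mean_error(xs, X)

print(f"Var[x] = {var_x:.4f}, sum(r^2)/n = {var_r:.4f}, b = {b:.4f}")
```

The variance computed from the measurements matches the mean square of the random errors, and b is small at this n. The formula for Var[x] never calls for b.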

1.2) X is known, and both random error and constant systematic error are present.

When the dᵢ are present and constant, then dᵢ = d for all i.

The mean of error = ‘b,’ = sumᵢ [(xᵢ – X)]/n = sumᵢ [(X + rᵢ + d) – X]/n = sumᵢ [(rᵢ+d)]/n = nd/n + sumᵢ [(rᵢ)/n], which goes to ‘d’ at large n.

Thus, in 1.2, b = d.

And: Var[x] = sumᵢ[(xᵢ – X)²]/n = sumᵢ[((X + rᵢ + d) – X)²]/n = sumᵢ [(rᵢ+d)²]/n, which produces a dispersion around ‘d.’

Thus, because X is known and ‘d’ is constant, ‘d’ can be found exactly and subtracted away.

The mean of the final error, ‘b’ never enters the variance.

That is, b => d, a real-number constant that can be known and can be corrected out of subsequent measurements of samples.

This last remains true in other laboratory samples where the X is unknown, because the method has been calibrated against a similar sample of known X and the methodological ‘d’ has been determined. That ‘d’ is always constant is an assumption, i.e., that experimenter error is absent and the methodology is identical.
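The calibration logic of case 1.2 can be sketched the same way. The numbers below (X = 10.0, d = 0.3, random error SD 0.5) are invented for illustration, and the correction step simply subtracts the calibrated mean error b from each measurement.

```python
import random

rng = random.Random(2)
X = 10.0        # known calibration value (hypothetical)
d = 0.3         # constant systematic error (hypothetical)
n = 100_000
rs = [rng.gauss(0.0, 0.5) for _ in range(n)]
xs = [X + r + d for r in rs]

b = sum(x - X for x in xs) / n          # mean of error; tends to d at large n
corrected = [x - b for x in xs]         # subtract the calibrated offset

var_raw = sum((x - X) ** 2 for x in xs) / n               # sum_i (r_i + d)^2 / n
var_corrected = sum((x - X) ** 2 for x in corrected) / n  # offset removed

print(f"b = {b:.4f}, raw = {var_raw:.4f}, corrected = {var_corrected:.4f}")
```

Here b recovers d closely, and removing it leaves only the random dispersion. This works only because X is known and d is assumed constant.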

Case 2: X is UNknown, and both random error and systematic error are present

Then the mean of xᵢ = [sumᵢ (xᵢ)/n] = x_bar.

As before, let xᵢ = X + eᵢ = X + rᵢ + dᵢ.

Var[x] = sumᵢ[(xᵢ – x_bar)²]/(n-1), and the SD describes a dispersion around x_bar.

2.1) Systematic error = 0.

If dᵢ = 0, then eᵢ = rᵢ is random, and x_bar becomes a measure of X at large n.

Var[x] = sumᵢ[(xᵢ – x_bar)²]/(n-1) = sumᵢ[(X + rᵢ) – (X+r_r)]²/(n-1) = sumᵢ[(rᵢ – r_r)]²/(n-1), where r_r is the residual of error in x_bar over interval ‘n’.

As above, the mean of error ‘b’ = sumᵢ[(xᵢ – x_bar)]/n, = sumᵢ[(X+rᵢ) – (X+r_r)]/n = sumᵢ[(rᵢ – r_r)]/n = [sumᵢ(rᵢ)/n – n(r_r)/n], and b = r_bar – r_r, where r_bar is the average of error over the ‘n’ interval.

Then b is a real number, which again does not enter the uncertainty variance and which approaches zero at large n.

2.2) If dᵢ is fixed = c

Then xᵢ = X + rᵢ + c.

The error mean = ‘b’ = sumᵢ[(xᵢ – x_bar)]/n = sumᵢ[(X + rᵢ + c) – (X + c + r_r)]/n, and b = sumᵢ[(rᵢ – r_r)]/n = sumᵢ(rᵢ)/n – n(r_r)/n, and b = (r_bar – r_r), wherein the signs of r_bar and r_r are unspecified.

The Var[x] = sumᵢ[(xᵢ – x_bar)²]/(n-1) = sumᵢ [(X+rᵢ+c) – (X+r_r+c)]²/(n-1) = sumᵢ[(rᵢ – r_r)²]/(n-1).

The variance describes a dispersion around r_r.

The mean error, ‘b’ does not enter the variance.
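As a hedged illustration of case 2.2, the Python sketch below compares the empirical variance of the same random errors with and without a constant offset c. The values of X, c, and the error SD are invented, and X is used only to generate the data, never in the statistics.

```python
import random
import statistics

rng = random.Random(3)
X = 10.0        # true value, unknown to the experimenter (hypothetical)
c = 0.3         # constant systematic error (hypothetical)
rs = [rng.gauss(0.0, 0.5) for _ in range(10_000)]

xs_unbiased = [X + r for r in rs]
xs_biased = [X + r + c for r in rs]

var_unbiased = statistics.variance(xs_unbiased)   # divides by n-1
var_biased = statistics.variance(xs_biased)
shift = statistics.fmean(xs_biased) - statistics.fmean(xs_unbiased)

print(f"Var without c = {var_unbiased:.4f}, with c = {var_biased:.4f}, x_bar shift = {shift:.4f}")
```

The two variances agree, while x_bar moves by c. With X unknown, nothing in the measurements alone reveals that offset.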

Case 3: X is UNknown and systematic error, dᵢ, varies due to uncontrolled variables.

Uncontrolled variables mean that every measurement (or every model run) is impacted by inconstant deterministic perturbations, i.e., inconstant causal influences. These modify the value of each result with unknown biases that vary with each measurement (or model run).

Any measurement, xᵢ = X + rᵢ + dᵢ, and dᵢ is a deterministic, non-random variable, and usually non-zero.

Over two measurement sequences of number n and m, the mean error = b = sumᵢ(rᵢ + dᵢ)/n, = bn, and bm = sumj(rj + dj)/m and bn ≠ bm, even if interval n equals interval m.

Var[x]n = sumᵢ[(xᵢ – x_bar-n)²]/(n-1), where x_bar-n is x-bar over sequence n.

Var[x]m = sumj[(xj – x_bar-m)²]/(m-1)

and Var[x]n = sumᵢ[(xᵢ – x_bar-n)²]/(n-1) = sumᵢ[(X+rᵢ+dᵢ) – (X+r_r+d_bar-n)]²/(n-1) = sumᵢ[(rᵢ – r_r + dᵢ – d_bar-n)²]/(n-1) = sumᵢ[rᵢ – (d_bar-n + r_r – dᵢ)]²/(n-1).

Likewise, Var[x]m = sumj[rj – (d_bar-m + r_r – dj)]²/(m-1).

Thus, neither bn nor bm enter into either Var[x], contradicting Rich’s assumption.

The dᵢ, dj enter into the total uncertainty of the x_bar-n, x_bar-m. Further, the variation of dᵢ, dj with each i, j means that the dispersion of Var[x]n,m will include the dispersion of the dᵢ, dj. The deterministic cause of dᵢ, dj will very likely make their distribution non-normal.

That is, when systematic error is inconstant due to uncontrolled variables, dᵢ will vary with each i, and will produce a dispersion represented by the standard deviation of the dᵢ.

This negates the claim that systematic error cannot contribute an uncertainty interval.

Also, x_bar-n ≠ x_bar-m, and (dᵢ – d_bar-n) ≠ (dj – d_bar-m).

Therefore Var[x]n ≠ Var[x]m, even at large n, m and including when n = m over well-separated periods.
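Case 3 can be illustrated with a toy Python example. The two bias functions below are hypothetical stand-ins for uncontrolled variables acting over two equal-length measurement sequences. Their shapes and magnitudes are invented and represent no real instrument or model.

```python
import math
import random
import statistics

X = 10.0        # true value, unknown to the experimenter (hypothetical)
N = 1000        # length of each measurement sequence

def bias_n(i):
    """Hypothetical deterministic bias acting during sequence n."""
    return 0.8 * math.sin(2 * math.pi * i / N)

def bias_m(i):
    """Hypothetical deterministic bias acting during sequence m."""
    return 0.5 + 0.2 * math.sin(2 * math.pi * i / N)

def measure(rng, bias):
    """x_i = X + r_i + d_i, with r_i random (SD 0.1) and d_i = bias(i)."""
    return [X + rng.gauss(0.0, 0.1) + bias(i) for i in range(N)]

rng = random.Random(4)
xs_n = measure(rng, bias_n)
xs_m = measure(rng, bias_m)

mean_n, mean_m = statistics.fmean(xs_n), statistics.fmean(xs_m)
var_n, var_m = statistics.variance(xs_n), statistics.variance(xs_m)   # n-1 denominator

print(f"x_bar-n = {mean_n:.3f}, x_bar-m = {mean_m:.3f}")
print(f"Var[x]n = {var_n:.3f}, Var[x]m = {var_m:.3f}")
```

With random error alone, both variances would be near 0.01. In this sketch they differ from that value and from each other because the dispersion of the dᵢ is carried into each Var[x], and the two means differ as well.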

Case 4: X is known, and dᵢ varies due to uncontrolled variables.

This is a case of calibration against a known X when uncontrolled variables are present, and mirrors the calibration of GCM-simulated global cloud fraction against observed global cloud fraction.

4.1) A series of n measurements.

Here, eᵢ = xᵢ – X, and Var[x] = sumᵢ[(xᵢ – X)²]/n = sumᵢ(eᵢ)²/n = sumᵢ[(rᵢ + dᵢ)²]/n = [u(x)²].

As eᵢ = rᵢ + dᵢ, then Var[x] = sumᵢ[(rᵢ + dᵢ)²]/n, but the values of each rᵢ and dᵢ are unknown.

The denominator is ‘n’ rather than (n-1) because X is known and degrees of freedom are not lost to a mean in calculating the standard variance.

For n measurements of xᵢ the mean of error = b = sumᵢ(eᵢ)/n = sumᵢ(rᵢ + dᵢ)/n = variable depending on ‘n,’ because dᵢ varies in an unknown but deterministic way across n.

However, X is known, therefore (xᵢ – X) = eᵢ is known to be the true and complete error in the i-th measurement.

At large n, the sumᵢ(rᵢ) becomes negligible, and Var[x] = sumᵢ[(eᵢ)²/n] = sumᵢ[(dᵢ)²/n] = [u(x)²] in the limit, which is very likely a non-normal dispersion.

The systematic error produces a dispersion because the dᵢ vary. At large n, the uncertainty reduces to the interval due to systematic error.

The mean of error, ‘b,’ does not enter the variance.

The claim that systematic error cannot produce an uncertainty interval is again negated.
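A minimal Python sketch of the case 4 calibration follows. It assumes an invented sinusoidal bias dᵢ and a random error that is small by comparison (SD 0.01), so that u(x) is dominated by the dᵢ. The helper `calibration_uncertainty` is a name of my own, not a function from any library.

```python
import math
import random

def calibration_uncertainty(xs, X):
    """u(x) = sqrt( sum_i (x_i - X)^2 / n ), with X known (case 4.1 form)."""
    return math.sqrt(sum((x - X) ** 2 for x in xs) / len(xs))

rng = random.Random(5)
X = 10.0        # known calibration value (hypothetical)
n = 10_000
ds = [math.sin(2 * math.pi * i / n) for i in range(n)]   # hypothetical deterministic bias
rs = [rng.gauss(0.0, 0.01) for _ in range(n)]            # small random error
xs = [X + r + d for r, d in zip(rs, ds)]

u_x = calibration_uncertainty(xs, X)             # from the measured errors e_i
u_d = math.sqrt(sum(d * d for d in ds) / n)      # from the systematic errors alone
b = sum(x - X for x in xs) / n                   # mean of error

print(f"u(x) = {u_x:.4f}, rms of d = {u_d:.4f}, b = {b:.5f}")
```

In this toy calibration the mean of error is close to zero, yet u(x) is large and nearly equal to the rms of the dᵢ. The interval comes from the varying systematic error, not from b.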

Case 5: X is UNknown and dᵢ varies due to uncontrolled variables. The experimental sample is physically similar to the calibration sample in Case 4.

5.1) Let x’ᵢ be the i-th of n measurements of experimental sample 5.

The estimated mean of error = b’ = sumᵢ(x’ᵢ – x’_bar)/n.

When x’ᵢ is measured, and X’ is unknown, Var[x’] = sumᵢ[(x’ᵢ – x’_bar)²]/(n-1)

= sumᵢ[(X’+r’ᵢ+d’ᵢ) – (X’ + d’_bar + r’_r)]²/(n-1)

and Var[x’] = sumᵢ[r’ᵢ + (d’ᵢ – d’_bar – r’_r)]²/(n-1).

Again, the mean of error b’ does not enter into the empirical variance.

And again, the dispersion of the implicit d’ᵢ contributes to the total uncertainty interval.